CERN Prototype

protoDUNE

Overview

The protoDUNE experimental program is designed to test and validate the technologies and designs that will be applied to the construction of the DUNE Far Detector at the Sanford Underground Research Facility (SURF). The protoDUNE detectors will be operated in a dedicated beam line at the CERN SPS accelerator complex. The rate and volume of data produced by these detectors will be substantial and will require extensive system design and integration effort.

As of Fall 2015, "protoDUNE" is the official name for the two apparatuses to be used in the CERN beam test: the single-phase and dual-phase LArTPC detectors. Each has received a formal CERN experiment designation:

- NP02 for the dual-phase detector. Yearly report

- NP04 for the single-phase detector. Yearly report

The CERN Proposal and the TDR

- protoDUNE CERN proposal: DUNE DocDB 186

- As of summer 2016, the protoDUNE TDR is a work in progress and is maintained on GitHub. Contact Tom Junk, Brett Viren or Maxim Potekhin for more detail.

Beam, Experimental Hall and other Infrastructure

- LBNE DocDB 9989: Beam Group presentation 11/18/14.

- Integration status as of early July 2015

- EHN1 Extension Coordination - CERN Neutrino Platform Project (SharePoint pages at CERN)

- North Area Extension: installation schedule

Materials and Meetings

- Current series of meetings at CERN - "neutrino platform" and "detector integration"

- Facilities Integration

- protoDUNE measurements/analysis group meetings

- ARCHIVE: CERN Prototype Materials - collection of reference materials and history of this subject

Expected Data Volume and Rates (UNDER CONSTRUCTION)

Important Note

At the time of writing (Summer 2016) this information is still under development and this page is yet to be edited to reflect the most recent methodology and estimates.

Overview

In order to provide the necessary precision for reconstruction of the ionization patterns in the LArTPC, both single-phase and dual-phase designs share the same fundamental characteristics:

- High spatial granularity of readout (e.g. the electrode pattern), and the resulting high channel count

- High digitization frequency (which is essential to ensure a precise position measurement along the drift direction)

Another factor common to both designs is the relatively slow drift velocity of electrons in Liquid Argon, which is of the order of millimeters per microsecond, depending on the electric field in the drift volume and other parameters. This leads to a long readout window (of the order of milliseconds), required to collect all of the ionization produced in the Liquid Argon volume by the event of interest. Even though the readout times differ substantially between the two designs, the net effect is similar: combined with the high digitization frequency in every channel (as explained above), the long readout window leads to a considerable amount of data per event. Each event is comparable in size to a high-resolution digital photograph.
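The arithmetic behind the event size can be sketched as follows: the readout window is roughly the drift length divided by the drift velocity, and the raw (uncompressed, non-zero-suppressed) event size is channels × samples per channel × bytes per sample. The parameter values below (3.6 m drift, 1.6 mm/µs, 15,360 channels, 2 MHz sampling, 12-bit samples stored in 2-byte words) are assumptions chosen for illustration, not official protoDUNE figures; the function names are hypothetical.

```python
def drift_time_us(drift_length_mm: float, drift_velocity_mm_per_us: float) -> float:
    """Time for ionization electrons to cross the full drift length, in microseconds."""
    return drift_length_mm / drift_velocity_mm_per_us


def raw_event_size_bytes(n_channels: int, sampling_rate_mhz: float,
                         readout_window_us: float, bytes_per_sample: int = 2) -> int:
    """Uncompressed, non-zero-suppressed event size: every channel is digitized
    for the whole readout window."""
    samples_per_channel = round(sampling_rate_mhz * readout_window_us)  # MHz * us = number of ticks
    return n_channels * samples_per_channel * bytes_per_sample


# Illustrative (assumed) single-phase-like parameters, not official detector numbers
window = drift_time_us(drift_length_mm=3600.0, drift_velocity_mm_per_us=1.6)
size = raw_event_size_bytes(n_channels=15360, sampling_rate_mhz=2.0, readout_window_us=window)
print(f"readout window ~ {window / 1000:.2f} ms, raw event ~ {size / 1e6:.0f} MB")
```

With these assumed values the window comes out at about 2.25 ms, consistent with the ~2 ms collection time quoted below, and a raw event of roughly 138 MB before any compression.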

There are a few data reduction (compression) techniques that may be applicable to protoDUNE raw data. Some algorithms are inherently lossy, such as Zero Suppression, which discards parts of the digitized waveforms in LArTPC channels according to certain logic (e.g. when the signal stays below a predefined threshold for a period of time). There are also lossless compression techniques, such as Huffman coding and others. At the time of writing it is assumed that only lossless algorithms will be applied to the compression of the protoDUNE raw data.
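The difference between the two classes can be illustrated on a toy waveform. The sketch below is not the protoDUNE implementation: the zero suppression is a simple threshold on |sample − pedestal| with a few samples of padding around each region of interest, and the lossless path is a swapped-in example (delta encoding followed by zlib's DEFLATE, which combines LZ77 with Huffman coding). The pedestal, threshold and padding values are hypothetical, and the samples are assumed to be 12-bit so that deltas fit in signed 16-bit integers.

```python
import struct
import zlib


def zero_suppress(samples, pedestal, threshold, pad=2):
    """Lossy zero suppression (illustrative): keep only regions of interest (ROIs)
    where |sample - pedestal| > threshold, widened by `pad` samples on each side.
    Returns a list of (start_tick, kept_samples) pairs; everything else is dropped."""
    n = len(samples)
    keep = [False] * n
    for i, s in enumerate(samples):
        if abs(s - pedestal) > threshold:
            for j in range(max(0, i - pad), min(n, i + pad + 1)):
                keep[j] = True
    rois, i = [], 0
    while i < n:
        if keep[i]:
            start = i
            while i < n and keep[i]:
                i += 1
            rois.append((start, samples[start:i]))
        else:
            i += 1
    return rois


def lossless_pack(samples):
    """Lossless (illustrative): delta-encode the ADC samples, pack them as little-endian
    signed 16-bit integers (assumes 12-bit ADC values), then DEFLATE them with zlib."""
    deltas = [b - a for a, b in zip([0] + samples[:-1], samples)]
    raw = struct.pack(f"<{len(deltas)}h", *deltas)
    return zlib.compress(raw, 9)


def lossless_unpack(blob):
    """Invert lossless_pack exactly: inflate, unpack the int16 deltas, cumulative-sum them."""
    raw = zlib.decompress(blob)
    deltas = struct.unpack(f"<{len(raw) // 2}h", raw)
    samples, acc = [], 0
    for d in deltas:
        acc += d
        samples.append(acc)
    return samples


# Toy waveform: flat pedestal of 500 ADC counts with one short pulse (hypothetical values)
waveform = [500] * 100
waveform[40:45] = [520, 560, 600, 560, 520]

print(zero_suppress(waveform, pedestal=500, threshold=10))
blob = lossless_pack(waveform)
assert lossless_unpack(blob) == waveform  # bit-exact round trip
print(f"{2 * len(waveform)} bytes raw -> {len(blob)} bytes compressed")
```

Zero suppression keeps only the pulse and its padding, so the pedestal samples are gone for good; the lossless path is larger but reproduces the waveform exactly, which is why it is the assumed baseline.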

It is foreseen that the total amount of data produced by the protoDUNE detectors will be of the order of a few petabytes (including commissioning runs with cosmic rays). Instantaneous and average data rates in the data transmission chain are expected to be substantial. For these reasons, capturing the data streams generated by the protoDUNE DAQ systems, buffering the data, performing fast QA analysis, and transporting the data to sites outside CERN for processing (e.g. FNAL, BNL) will require significant resources and adequate planning.

Baseline parameters as per the proposal

This information is presented here mainly for historical reference and to reflect the content of the CERN Proposal.

Initial estimates in this area were developed when preparing the CERN proposal, under the assumption of Zero Suppression for the single-phase detector. In this case, both the data rate and the data volume are determined primarily by the number of cosmic-ray muon tracks recorded within the readout window, which is commensurate with the electron collection time in the TPC (~2 ms).

For a quick summary of the data rates, data volume and related requirements see:

A few numbers for the single-phase detector:

- Planned trigger rate: 200 Hz

- Instantaneous data rate in DAQ: 1 GB/s

- Sustained average data rate: 200 MB/s

Based on this, the nominal network bandwidth required to link the DAQ to CERN storage elements is ~2 GB/s. This estimate rests on the essential assumption that zero suppression will be used in all measurements. Taking some portion of the data in non-ZS mode is under consideration; this would require approximately 20 GB/s connectivity. Since WA105 specified this as their requirement, DUNE-PT may be able to obtain a link in this range.
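The quoted numbers can be cross-checked with simple arithmetic. This is an illustrative reading rather than part of the proposal: it assumes decimal units (1 GB = 1000 MB), that the sustained rate equals trigger rate × average event size, and that the 2 GB/s link figure is meant as headroom over the instantaneous DAQ rate. Variable names are hypothetical.

```python
# Single-phase numbers quoted above (proposal baseline, zero-suppressed).
# Assumes decimal units: 1 GB = 1000 MB.
TRIGGER_RATE_HZ = 200
INSTANT_RATE_MB_S = 1000      # 1 GB/s
SUSTAINED_RATE_MB_S = 200
LINK_ZS_GB_S = 2
LINK_NON_ZS_GB_S = 20

event_size_mb = SUSTAINED_RATE_MB_S / TRIGGER_RATE_HZ          # average event size implied by the two rates
peak_to_average = INSTANT_RATE_MB_S / SUSTAINED_RATE_MB_S      # how bursty the DAQ output is
headroom = LINK_ZS_GB_S * 1000 / INSTANT_RATE_MB_S             # link capacity over instantaneous DAQ rate
link_gbit_s = {"ZS": LINK_ZS_GB_S * 8, "non-ZS": LINK_NON_ZS_GB_S * 8}  # bytes -> bits, as links are specified

print(f"implied event size ~ {event_size_mb:.1f} MB")
print(f"instantaneous/sustained = {peak_to_average:.0f}x, link headroom = {headroom:.0f}x")
print(f"link in network units (Gbit/s): {link_gbit_s}")
```

Under these assumptions a zero-suppressed event averages about 1 MB, the DAQ output peaks at five times its average, and the 2 GB/s and 20 GB/s links correspond to 16 and 160 Gbit/s respectively.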

The measurement program is still being updated; the total volume of data to be taken is expected to be O(1 PB). Brief notes on the statistics can be found in Appendix II of the "Materials" page.
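To put O(1 PB) in perspective, the sketch below converts the 200 MB/s sustained rate into a daily volume and into the time needed to accumulate 1 PB. It assumes decimal units and continuous running with no downtime, which is unrealistic, so the result is a lower bound on calendar time.

```python
SECONDS_PER_DAY = 86_400
sustained_bytes_per_s = 200e6    # 200 MB/s, proposal baseline (decimal units assumed)
target_bytes = 1e15              # O(1 PB)

per_day_tb = sustained_bytes_per_s * SECONDS_PER_DAY / 1e12
days_to_target = target_bytes / sustained_bytes_per_s / SECONDS_PER_DAY

print(f"{per_day_tb:.2f} TB/day, ~{days_to_target:.0f} days of continuous running to reach 1 PB")
```

At about 17 TB per day, roughly two months of uninterrupted data taking would be needed to reach 1 PB.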

Taking data in non-ZS mode

As of Q2 2016, non-ZS mode is being considered for implementation in protoDUNE (single-phase).

- Plans for Raw Data Management (slides)

- protoDUNE/SP data scenarios (spreadsheet): DUNE DocDB 1086

Calibration

- Main Calibration Page

- Calibration Strategy: presentation by I. Kreslo at the protoDUNE Science Workshop in June 2016

Software and Computing

Overview

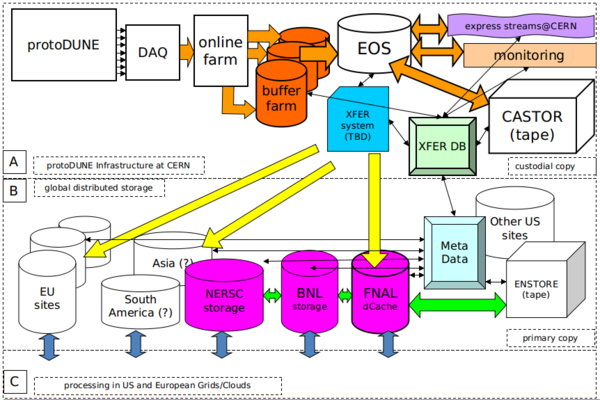

Due to the short time available for data taking, the data collected during the experiment is considered "precious" (impossible or hard to reproduce), and redundant storage must be provided for it. One primary copy would be stored on tape at CERN, another at FNAL, and auxiliary copies will be shared among US sites, e.g. BNL and NERSC. The aim is to reuse existing software from other experiments to move data between CERN and the US with an appropriate degree of automation, error checking and correction, and monitoring.
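Error checking in such transfers usually means comparing a checksum computed where a file was written with one recomputed on each copy. The sketch below assumes Adler-32 (available in Python's `zlib` module), a checksum type widely supported by grid storage and transfer tools; the choice of algorithm and all function names are hypothetical and do not describe the protoDUNE design.

```python
import io
import zlib


def adler32_of_stream(stream, chunk_size=1 << 20):
    """Adler-32 of a binary stream, computed incrementally so that large raw-data
    files never need to fit in memory. Returned as 8 lowercase hex digits."""
    value = 1  # Adler-32 starting value
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            break
        value = zlib.adler32(chunk, value)
    return f"{value & 0xFFFFFFFF:08x}"


def adler32_of_file(path, chunk_size=1 << 20):
    """Checksum of a file on disk."""
    with open(path, "rb") as f:
        return adler32_of_stream(f, chunk_size)


def copy_is_intact(source_checksum, destination_path):
    """Compare the checksum recorded at the source with one recomputed on the destination copy."""
    return adler32_of_file(destination_path) == source_checksum


print(adler32_of_stream(io.BytesIO(b"Wikipedia")))  # 11e60398
```

Because the checksum is computed chunk by chunk, the same value is obtained regardless of `chunk_size`, so the source and destination sides do not need to agree on buffer sizes.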

An effort will be made to implement prompt (near-time) processing and monitoring of data quality, including full tracking in limited express production streams, i.e. on a subset of the data.
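One simple way to define an express stream on a subset of the data is a deterministic prescale: keep one event in every N, chosen from the event number so that the selection is reproducible. The sketch below is a hypothetical illustration of that idea; the function name and the prescale value are assumptions, not the planned protoDUNE selection.

```python
def express_stream(event_numbers, prescale):
    """Hypothetical express-stream selection: keep one event in every `prescale`,
    chosen deterministically from the event number so the selection is reproducible."""
    if prescale < 1:
        raise ValueError("prescale must be >= 1")
    return [n for n in event_numbers if n % prescale == 0]


selected = express_stream(range(1, 1001), prescale=50)
print(len(selected), selected[:3])  # 20 [50, 100, 150]
```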

DAQ

To-Do list from the DAQ Group

DAQ pages

- General DAQ Info relevant to online/offline interface

- protoDUNE DAQ Topics: list of questions, issues and action items (ported from Giles' link)

Data Handling

Conceptual Diagram of the Data Flow

Requirements and Initial Design Ideas

Initial design ideas (current as of 2016) are captured in the document created during a meeting with FNAL data experts in mid-March 2016. The main idea is to leverage a few existing systems (F-FTS, SAM, etc.) in order to satisfy a number of requirements. A few of the initial requirements are documented below:

- Initial requirement for managing the state of data

- Basic Requirements for the protoDUNE Raw Data Management System: DUNE DocDB 1209

- Raw Data Management (slides)
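Managing the state of data can be thought of as a small state machine per raw-data file, where a file may be removed from the online buffer only after its redundant copies are confirmed. The states and transitions below are hypothetical, loosely following the CERN/FNAL copy policy described above; they are not taken from the requirements documents.

```python
from enum import Enum


class FileState(Enum):
    # Hypothetical lifecycle of one raw-data file
    WRITTEN = "written"          # closed by the DAQ in the online buffer
    TRANSFERRED = "transferred"  # copied to central storage at CERN
    VERIFIED = "verified"        # checksum of the copy matches the source
    ARCHIVED = "archived"        # tape copies at CERN and FNAL confirmed


ALLOWED = {
    FileState.WRITTEN: {FileState.TRANSFERRED},
    FileState.TRANSFERRED: {FileState.VERIFIED, FileState.WRITTEN},  # back to WRITTEN to retry a bad transfer
    FileState.VERIFIED: {FileState.ARCHIVED},
    FileState.ARCHIVED: set(),
}


class RawFile:
    def __init__(self, name):
        self.name = name
        self.state = FileState.WRITTEN
        self.history = [FileState.WRITTEN]

    def advance(self, new_state):
        """Move to `new_state`, rejecting transitions not listed in ALLOWED."""
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.name}: illegal transition {self.state.value} -> {new_state.value}")
        self.state = new_state
        self.history.append(new_state)

    def safe_to_delete_from_buffer(self):
        """Only fully archived files may be removed from the online buffer."""
        return self.state is FileState.ARCHIVED
```

Keeping the allowed transitions in one table makes the policy easy to audit and ensures that a file can never skip the verification step on its way to deletion.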

Main Design Document

- Design of the protoDUNE Data Management System: DUNE DocDB 1212

Online Storage and Online/Offline Interface

Choice of storage solution

A few options are being considered, e.g. an XRootD cluster vs. a SAN (such as iSCSI or FC). The SAN option has the advantage of being available as an appliance; however, the information available at the links below shows that there are issues with sharing storage, among other things.

XROOTD

Documentation on XROOTD systems at BNL

SAN Technology

iSCSI

- iSCSI Basics (recommended)

- Comments on block level storage and what it means

- Intro to iSCSI

- iSCSI on Linux

- iSCSI Initiator and Target Configuration

- Install and Manage iSCSI Volume (Linux/CentOS /Fedora Linux)

- Setting up LUNs on Linux

- Multiple Hosts for a single iSCSI Target

- Enhancing iSCSI Performance

- Another detailed example of iSCSI setup: mirrored volumes for redundancy

iSCSI emulates a SCSI interface over the network. The client ("initiator" in iSCSI terminology) accesses remote storage as a block device, i.e. as if it were a locally attached disk.

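Having multiple initiators access the same "target" (assuming it is configured with a single partition) is not trivial. The technology essentially emulates SCSI and is not intended for sharing: an ordinary filesystem assumes it has exclusive access to its block device, so two hosts mounting it read-write can corrupt it. Sharing can be done, but only with considerable effort and many caveats.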

Clients accessing different partitions can be expected to operate normally, but of course there is no shared data in this case, and there can be network congestion issues.

MC/S (multiple connections per session) improves the scalability and performance of an iSCSI installation. This feature is not supported by Open-iSCSI, the standard initiator in the Linux kernel. There is commercial software ("core-iSCSI") that provides it.

It is important to remember that iSCSI works at the kernel level, whereas a storage federation like XRootD operates in user space.

Much of the same logic applies to Fibre Channel. For sharing to work, the SAN must be used with a cluster-aware filesystem.

iSCSI targets can have multiple NICs. Configuring those will require some work.

Redundancy can be achieved using LVM mirroring. This is in addition to another possibility, combining the disks in the iSCSI target into a RAID array, which is common practice in enterprise environments.

Fibre Channel

The Fibre Channel Protocol is a network protocol (just as TCP is). It is often used to transmit SCSI commands over a network, in which case it is conceptually very similar to iSCSI. There have been several generations of FC technology. It can use optical connections (the original medium, which gave the technology its name) as well as copper connections.

Storage at CERN

More information (including fairly technical bits) can be found on the CERN Data Handling page.

- EOS is a high-performance distributed disk storage system based on XRootD. It is used by major LHC experiments as the main destination for writing raw data.

- CASTOR is the principal tape storage system at CERN. It has a built-in disk layer, which was previously used for production and other activities; this is no longer the case, since that functionality is handled more efficiently by EOS. For that reason, the disk storage in CASTOR serves as a buffer for I/O and system functions.

Data Transfer: Examples and Reference Materials

- StashCache

- Archiving Scientific Data Outside of the Traditional HEP Domain, Using the Archive Facilities at Fermilab

- CMS FTS user tools

- The NOvA Data Acquisition System

- The DIRAC Data Management System and the Gaudi dataset federation

- Storage Management and Access in WLHC Computing Grid (a thesis by Flavia Donno)